本文将介绍这一细微差别。

我像往常一样开始硒,然后首先单击带有table斯坦共和国选举结果的所需表格所在的链接,然后崩溃

如您所知,细微差别在于,每次单击链接后,都会显示一个验证码。

通过分析站点的结构,发现链接数达到了约3万个。

我别无选择,只能在互联网上找到识别验证码的方法。找到一个服务

+验证码会像一个人一样被100%

识别-平均识别时间为9秒,这很长,因为我们有大约3万个不同的链接,我们需要关注并识别验证码。

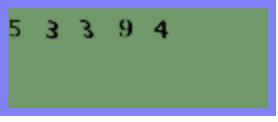

我立即放弃了这个想法。经过几次尝试获取验证码后,我注意到它并没有太大变化,绿色背景上的所有黑色数字均相同。

而且,由于我一直想用手触摸“视觉计算机”,因此我决定有机会亲自尝试每个人最喜欢的MNIST问题。

已经是17:00了,我开始寻找经过预训练的模型以识别数字。在此验证码上检查了它们之后,准确性令我不满意-好的,是时候收集图片并训练您的神经网络了。

首先,您需要收集培训样本。

我打开Chrome网络驱动程序,并在文件夹中显示1000个验证码。

from selenium import webdriver

i = 1000

driver = webdriver.Chrome('/Users/aleksejkudrasov/Downloads/chromedriver')

while i>0:

driver.get('http://www.vybory.izbirkom.ru/region/izbirkom?action=show&vrn=4274007421995®ion=27&prver=0&pronetvd=0')

time.sleep(0.5)

with open(str(i)+'.png', 'wb') as file:

file.write(driver.find_element_by_xpath('//*[@id="captchaImg"]').screenshot_as_png)

i = i - 1由于我们只有两种颜色,因此我将验证码转换为bw:

from operator import itemgetter, attrgetter

from PIL import Image

import glob

list_img = glob.glob('path/*.png')

for img in list_img:

im = Image.open(img)

im = im.convert("P")

im2 = Image.new("P",im.size,255)

im = im.convert("P")

temp = {}

#

for x in range(im.size[1]):

for y in range(im.size[0]):

pix = im.getpixel((y,x))

temp[pix] = pix

if pix != 0:

im2.putpixel((y,x),0)

im2.save(img)

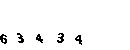

现在我们需要将验证码切成数字并将其转换为10 * 10的单个大小。

首先,我们将验证码切成数字,然后,由于验证码沿OY轴移动,因此我们需要裁剪所有不必要的内容并将图像旋转90°。

def crop(im2):

inletter = False

foundletter = False

start = 0

end = 0

count = 0

letters = []

name_slise=0

for y in range(im2.size[0]):

for x in range(im2.size[1]):

pix = im2.getpixel((y,x))

if pix != 255:

inletter = True

# OX

if foundletter == False and inletter == True:

foundletter = True

start = y

# OX

if foundletter == True and inletter == False:

foundletter = False

end = y

letters.append((start,end))

inletter = False

for letter in letters:

#

im3 = im2.crop(( letter[0] , 0, letter[1],im2.size[1] ))

# 90°

im3 = im3.transpose(Image.ROTATE_90)

letters1 = []

#

for y in range(im3.size[0]): # slice across

for x in range(im3.size[1]): # slice down

pix = im3.getpixel((y,x))

if pix != 255:

inletter = True

if foundletter == False and inletter == True:

foundletter = True

start = y

if foundletter == True and inletter == False:

foundletter = False

end = y

letters1.append((start,end))

inletter=False

for letter in letters1:

#

im4 = im3.crop(( letter[0] , 0, letter[1],im3.size[1] ))

#

im4 = im4.transpose(Image.ROTATE_270)

resized_img = im4.resize((10, 10), Image.ANTIALIAS)

resized_img.save(path+name_slise+'.png')

name_slise+=1

我想:“已经到了18:00,是时候解决这个问题了。”我想着,把文件夹中的数字和数字一路散开了。

我们声明一个简单的模型,该模型接受我们图片的扩展矩阵作为输入。

为此,请创建100个神经元的输入层,因为图片的大小为10 * 10。作为输出层,有10个神经元,每个神经元对应于0到9的数字。

from tensorflow.keras import Sequential

from tensorflow.keras.layers import Conv2D, MaxPooling2D, Flatten, Dense, Dropout, Activation, BatchNormalization, AveragePooling2D

from tensorflow.keras.optimizers import SGD, RMSprop, Adam

def mnist_make_model(image_w: int, image_h: int):

# Neural network model

model = Sequential()

model.add(Dense(image_w*image_h, activation='relu', input_shape=(image_h*image_h)))

model.add(Dense(10, activation='softmax'))

model.compile(loss='categorical_crossentropy', optimizer=RMSprop(), metrics=['accuracy'])

return model

我们将数据分为训练集和测试集:

list_folder = ['0','1','2','3','4','5','6','7','8','9']

X_Digit = []

y_digit = []

for folder in list_folder:

for name in glob.glob('path'+folder+'/*.png'):

im2 = Image.open(name)

X_Digit.append(np.array(im2))

y_digit.append(folder)我们将其分为训练和测试集:

from sklearn.model_selection import train_test_split

X_Digit = np.array(X_Digit)

y_digit = np.array(y_digit)

X_train, X_test, y_train, y_test = train_test_split(X_Digit, y_digit, test_size=0.15, random_state=42)

train_data = X_train.reshape(X_train.shape[0], 10*10) # 100

test_data = X_test.reshape(X_test.shape[0], 10*10) # 100

# 10

num_classes = 10

train_labels_cat = keras.utils.to_categorical(y_train, num_classes)

test_labels_cat = keras.utils.to_categorical(y_test, num_classes)

我们训练模型。

凭经验选择时期数和“批量”大小的参数:

model = mnist_make_model(10,10)

model.fit(train_data, train_labels_cat, epochs=20, batch_size=32, verbose=1, validation_data=(test_data, test_labels_cat))

我们保存权重:

model.save_weights("model.h5")

在第11个时代,精度非常好:精度= 1.0000。满意的是,我将于19:00回家休息,明天我仍然需要编写一个解析器来从CEC网站上收集信息。

第二天早上。

问题仍然很小,它仍然需要浏览CEC网站上的所有页面并获取数据:

加载经过训练的模型的权重:

model = mnist_make_model(10,10)

model.load_weights('model.h5')

我们编写一个函数来保存验证码:

def get_captcha(driver):

with open('snt.png', 'wb') as file:

file.write(driver.find_element_by_xpath('//*[@id="captchaImg"]').screenshot_as_png)

im2 = Image.open('path/snt.png')

return im2

让我们编写一个验证码预测功能:

def crop_predict(im):

list_cap = []

im = im.convert("P")

im2 = Image.new("P",im.size,255)

im = im.convert("P")

temp = {}

for x in range(im.size[1]):

for y in range(im.size[0]):

pix = im.getpixel((y,x))

temp[pix] = pix

if pix != 0:

im2.putpixel((y,x),0)

inletter = False

foundletter=False

start = 0

end = 0

count = 0

letters = []

for y in range(im2.size[0]):

for x in range(im2.size[1]):

pix = im2.getpixel((y,x))

if pix != 255:

inletter = True

if foundletter == False and inletter == True:

foundletter = True

start = y

if foundletter == True and inletter == False:

foundletter = False

end = y

letters.append((start,end))

inletter=False

for letter in letters:

im3 = im2.crop(( letter[0] , 0, letter[1],im2.size[1] ))

im3 = im3.transpose(Image.ROTATE_90)

letters1 = []

for y in range(im3.size[0]):

for x in range(im3.size[1]):

pix = im3.getpixel((y,x))

if pix != 255:

inletter = True

if foundletter == False and inletter == True:

foundletter = True

start = y

if foundletter == True and inletter == False:

foundletter = False

end = y

letters1.append((start,end))

inletter=False

for letter in letters1:

im4 = im3.crop(( letter[0] , 0, letter[1],im3.size[1] ))

im4 = im4.transpose(Image.ROTATE_270)

resized_img = im4.resize((10, 10), Image.ANTIALIAS)

img_arr = np.array(resized_img)/255

img_arr = img_arr.reshape((1, 10*10))

list_cap.append(model.predict_classes([img_arr])[0])

return ''.join([str(elem) for elem in list_cap])

添加一个下载表格的功能:

def get_table(driver):

html = driver.page_source #

soup = BeautifulSoup(html, 'html.parser') # " "

table_result = [] #

tbody = soup.find_all('tbody') #

list_tr = tbody[1].find_all('tr') #

ful_name = list_tr[0].text #

for table in list_tr[3].find_all('table'): #

if len(table.find_all('tr'))>5: #

for tr in table.find_all('tr'): #

snt_tr = []#

for td in tr.find_all('td'):

snt_tr.append(td.text.strip())#

table_result.append(snt_tr)#

return (ful_name, pd.DataFrame(table_result, columns = ['index', 'name','count']))

我们收集了9月13日的所有链接:

df_table = []

driver.get('http://www.vybory.izbirkom.ru')

driver.find_element_by_xpath('/html/body/table[2]/tbody/tr[2]/td/center/table/tbody/tr[2]/td/div/table/tbody/tr[3]/td[3]').click()

html = driver.page_source

soup = BeautifulSoup(html, 'html.parser')

list_a = soup.find_all('table')[1].find_all('a')

for a in list_a:

name = a.text

link = a['href']

df_table.append([name,link])

df_table = pd.DataFrame(df_table, columns = ['name','link'])

在13:00之前,我将遍历所有页面完成编写代码:

result_df = []

for index, line in df_table.iterrows():#

driver.get(line['link'])#

time.sleep(0.6)

try:#

captcha = crop(get_captcha(driver))

driver.find_element_by_xpath('//*[@id="captcha"]').send_keys(captcha)

driver.find_element_by_xpath('//*[@id="send"]').click()

time.sleep(0.6)

true_cap(driver)

except NoSuchElementException:#

pass

html = driver.page_source

soup = BeautifulSoup(html, 'html.parser')

if soup.find('select') is None:#

time.sleep(0.6)

html = driver.page_source

soup = BeautifulSoup(html, 'html.parser')

for i in range(len(soup.find_all('tr'))):#

if '\n \n' == soup.find_all('tr')[i].text:# ,

rez_link = soup.find_all('tr')[i+1].find('a')['href']

driver.get(rez_link)

time.sleep(0.6)

try:

captcha = crop(get_captcha(driver))

driver.find_element_by_xpath('//*[@id="captcha"]').send_keys(captcha)

driver.find_element_by_xpath('//*[@id="send"]').click()

time.sleep(0.6)

true_cap(driver)

except NoSuchElementException:

pass

ful_name , table = get_table(driver)#

head_name = line['name']

child_name = ''

result_df.append([line['name'],line['link'],rez_link,head_name,child_name,ful_name,table])

else:# ,

options = soup.find('select').find_all('option')

for option in options:

if option.text == '---':#

continue

else:

link = option['value']

head_name = option.text

driver.get(link)

try:

time.sleep(0.6)

captcha = crop(get_captcha(driver))

driver.find_element_by_xpath('//*[@id="captcha"]').send_keys(captcha)

driver.find_element_by_xpath('//*[@id="send"]').click()

time.sleep(0.6)

true_cap(driver)

except NoSuchElementException:

pass

html2 = driver.page_source

second_soup = BeautifulSoup(html2, 'html.parser')

for i in range(len(second_soup.find_all('tr'))):

if '\n \n' == second_soup.find_all('tr')[i].text:

rez_link = second_soup.find_all('tr')[i+1].find('a')['href']

driver.get(rez_link)

try:

time.sleep(0.6)

captcha = crop(get_captcha(driver))

driver.find_element_by_xpath('//*[@id="captcha"]').send_keys(captcha)

driver.find_element_by_xpath('//*[@id="send"]').click()

time.sleep(0.6)

true_cap(driver)

except NoSuchElementException:

pass

ful_name , table = get_table(driver)

child_name = ''

result_df.append([line['name'],line['link'],rez_link,head_name,child_name,ful_name,table])

if second_soup.find('select') is None:

continue

else:

options_2 = second_soup.find('select').find_all('option')

for option_2 in options_2:

if option_2.text == '---':

continue

else:

link_2 = option_2['value']

child_name = option_2.text

driver.get(link_2)

try:

time.sleep(0.6)

captcha = crop(get_captcha(driver))

driver.find_element_by_xpath('//*[@id="captcha"]').send_keys(captcha)

driver.find_element_by_xpath('//*[@id="send"]').click()

time.sleep(0.6)

true_cap(driver)

except NoSuchElementException:

pass

html3 = driver.page_source

thrid_soup = BeautifulSoup(html3, 'html.parser')

for i in range(len(thrid_soup.find_all('tr'))):

if '\n \n' == thrid_soup.find_all('tr')[i].text:

rez_link = thrid_soup.find_all('tr')[i+1].find('a')['href']

driver.get(rez_link)

try:

time.sleep(0.6)

captcha = crop(get_captcha(driver))

driver.find_element_by_xpath('//*[@id="captcha"]').send_keys(captcha)

driver.find_element_by_xpath('//*[@id="send"]').click()

time.sleep(0.6)

true_cap(driver)

except NoSuchElementException:

pass

ful_name , table = get_table(driver)

result_df.append([line['name'],line['link'],rez_link,head_name,child_name,ful_name,table])

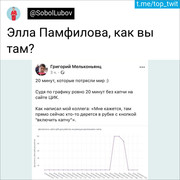

然后是改变我生活的推文